Introduction

In part 1, “Dig In and De-Mystify Tungsten Cluster Topologies,” we covered the various clustering topologies in a fair amount of detail.

In this short post we will highlight the key differences between Multi-Site Active/Active (MSAA) and Composite Active/Active (CAA) topologies.

The core principle behind an active/active topology is that you have more than one writable cluster.

So why do we have more than one type of Active/Active topology?

The key differences lie in the Connector behavior and the management of the cross-cluster replication:

- MSAA Managers see only the local cluster while CAA Managers see all clusters.

- MSAA cross-cluster replication requires a separate installation of the Tungsten Replicator software package while CAA cross-cluster replication is managed by the cluster Managers.

- MSAA Connectors have access only to the local cluster, while CAA Connectors have full read and write access to all clusters.

MSAA/CAA History

Composite Active/Active (CAA) was first released in version 6.0, and prior to that our only active/active solution was MSAA (called MSMM at that time, for Multi-Site Master-Master).

Internally at Continuent, once version 6.0 was released, it became convenient to refer to MSAA topologies as “v5”-style and CAA topologies as “v6”-style clustering. That shorthand is misleading, because both MSAA and CAA are available in every version of Tungsten Clustering from v6.0 onward.

MSAA will remain a viable topology, even when we release v7.0!

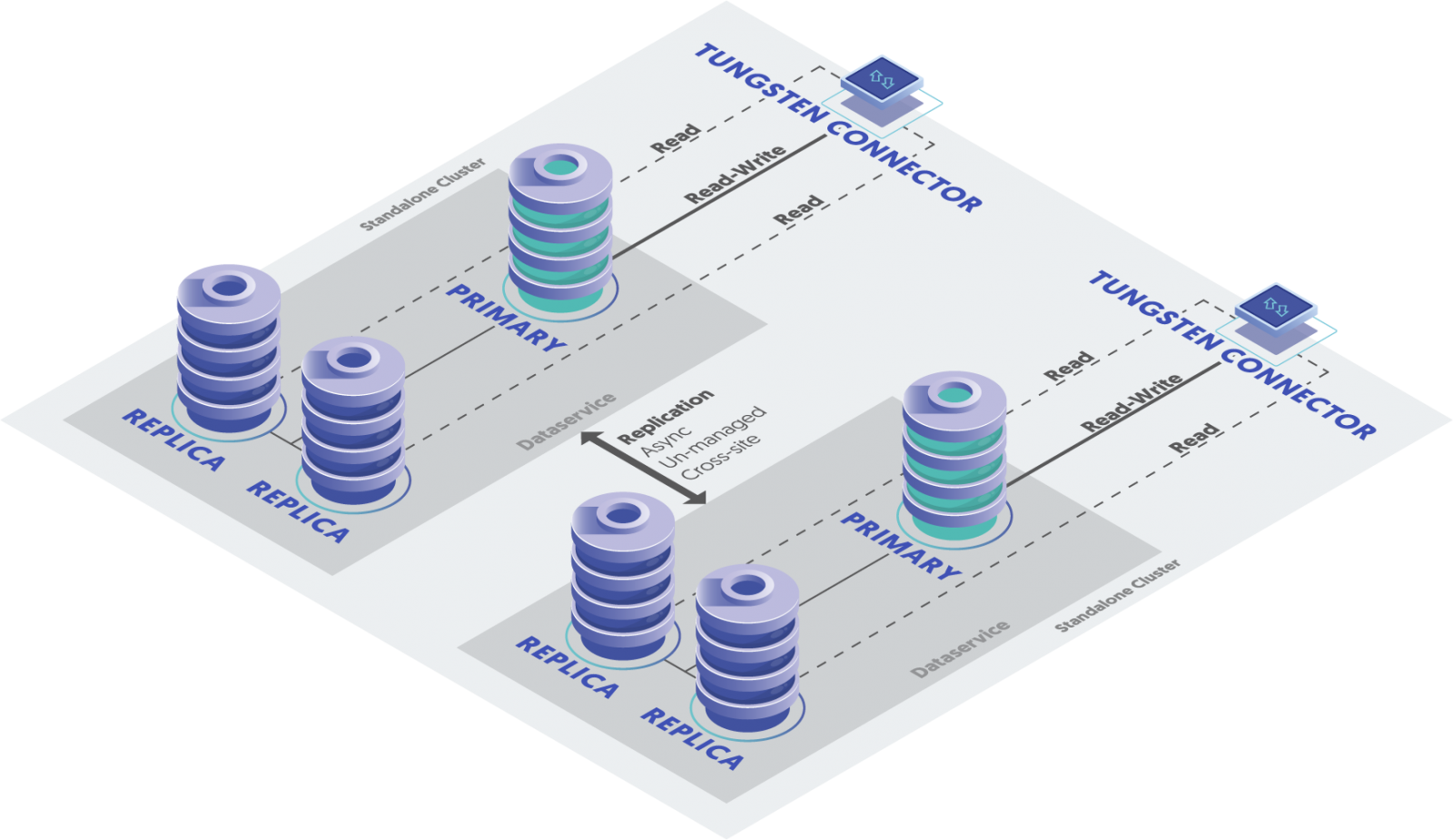

Multi-Site Active/Active (MSAA) Defined

In a Multi-Site Active/Active topology, writes are routed to the Primary MySQL node in the local Active cluster by the Connector. Replication between clusters is handled by a separate install of the Tungsten Replicator software acting on all nodes as a cluster-extractor.

- Un-managed cross-cluster replication

- MSAA requires two independent installations on each node, one for Tungsten Clustering and one for the standalone Tungsten Replicator (e.g. Tungsten Clustering installed into /opt/continuent and Tungsten Replicator into /opt/replicator)

- Connectors only know about one local cluster by default

- Managers see only the local cluster

- Each cross-cluster service must be manually defined in the configuration

- The cluster Managers have no knowledge of the cross-cluster replication services

- Cross-cluster services must be defined explicitly as {FROM_TO} in the configuration (e.g. nyc_london), but `trepctl services` and `trepctl status` show them under the name of the remote (FROM) cluster service (e.g. nyc)

- Cross-cluster services are not visible or manageable via `cctrl`

- Each software package must be configured separately to start at boot

- The same commands exist in two different paths (e.g. trepctl, thl, tpm and dsctl in both the /opt/continuent and /opt/replicator directory trees)

- Available since v2.0.5
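
The MSAA naming convention described in the list above can be sketched in a few lines of Python. This is only an illustration of the rule, not part of the Tungsten tooling: the function name `msaa_service_names` and its dictionary keys are hypothetical, and the cluster names are the nyc/london example already used above.

```python
def msaa_service_names(from_cluster: str, to_cluster: str) -> dict:
    """Return both names of one MSAA cross-cluster service (hypothetical helper)."""
    return {
        # Name that must be written explicitly in the configuration: {FROM_TO}
        "configured": f"{from_cluster}_{to_cluster}",
        # Name shown by `trepctl services` and `trepctl status`: {FROM}
        "displayed": from_cluster,
    }


names = msaa_service_names("nyc", "london")
print(names["configured"])  # nyc_london
print(names["displayed"])   # nyc
```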

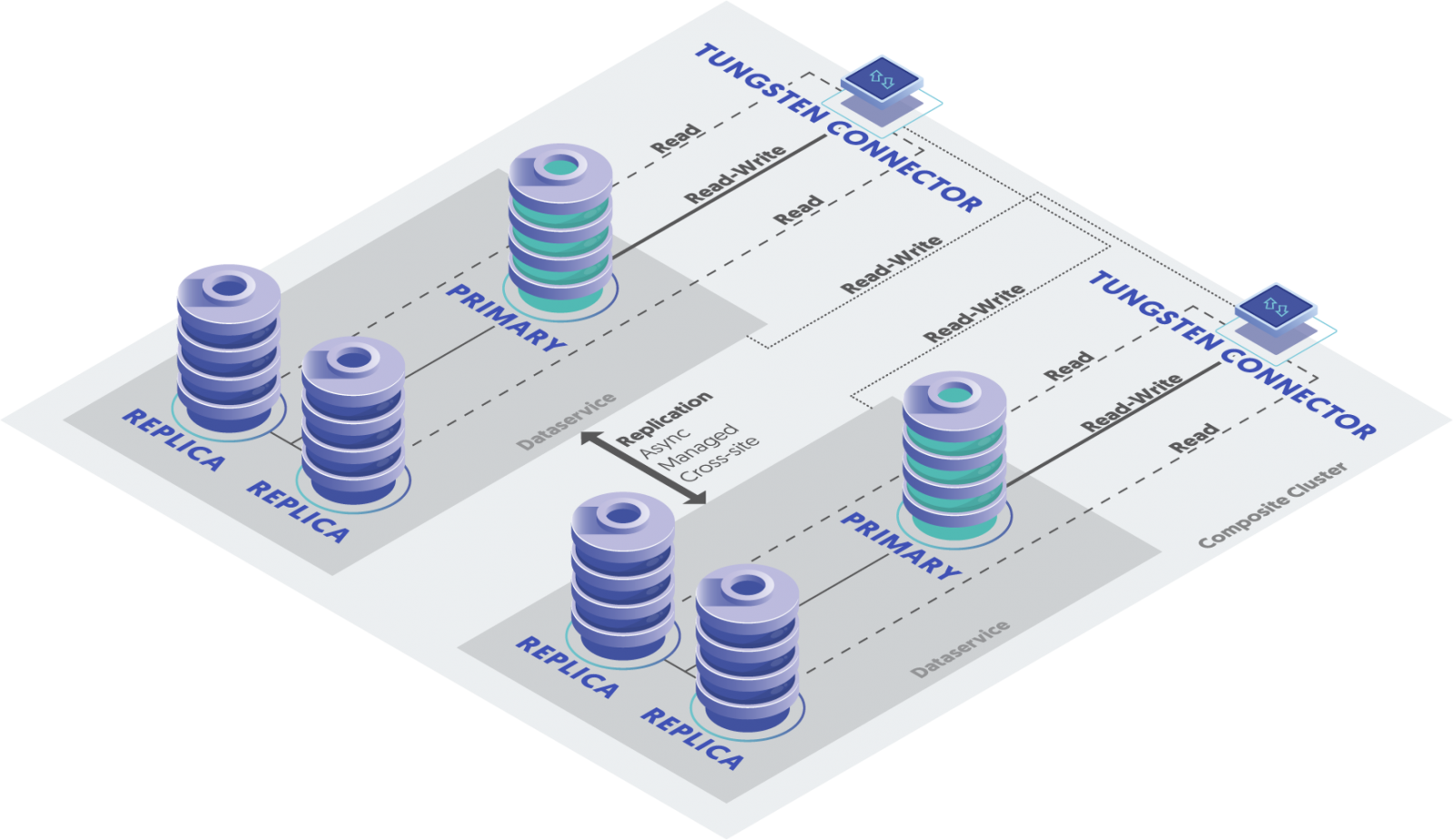

Composite Active/Active (CAA) Defined

In CAA, all clusters are Active, each containing a Primary writable MySQL host and some number of Replicas. There may be multiple Active clusters spanning physical locations and/or cloud providers.

- Key concept: Managed cross-cluster replication

- One Tungsten Clustering installation per node (/opt/continuent)

- The Tungsten Replicator software package is not needed at all

- One set of commands only (/opt/continuent)

- Managers see all clusters

- Connectors know about all clusters, both local and remote

- Connectors may be configured with read and write affinity at the cluster level (very powerful)

- Cross-cluster replication services are created automatically at install time

- Cross-cluster services are named “{LOCAL_SERVICE_NAME}_from_{REMOTE_SERVICE_NAME}”

- Example: `london_from_nyc`

- The cluster Managers fully control the cross-cluster replication services

- Cross-cluster services are fully manageable via `cctrl`

- Single software package to configure for start at boot

- First released in v6.0.0

- The entire cluster can be easily managed with Tungsten Dashboard, a powerful web-based GUI
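
As a rough sketch of the CAA naming pattern listed above, the following Python snippet derives the cross-cluster service names you might expect to see for a given set of clusters. It assumes that each cluster gets one service per remote cluster, which is an assumption made for illustration; the helper name `caa_cross_cluster_services` is hypothetical and not a Tungsten command or API.

```python
from itertools import permutations


def caa_cross_cluster_services(clusters):
    """Map each local cluster to its expected cross-cluster service names.

    Assumption: one service per (local, remote) pair, named
    {LOCAL_SERVICE_NAME}_from_{REMOTE_SERVICE_NAME}.
    """
    services = {name: [] for name in clusters}
    for local, remote in permutations(clusters, 2):
        services[local].append(f"{local}_from_{remote}")
    return services


services = caa_cross_cluster_services(["nyc", "london"])
print(services["london"])  # ['london_from_nyc']
print(services["nyc"])     # ['nyc_from_london']
```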

Multi-Site Active/Active versus Composite Active/Active

To repeat: the key difference lies in how cross-cluster replication is managed. In CAA it is managed by the cluster Managers, and in MSAA it is not.

In a Composite Active/Active (CAA) topology, writes are routed to the Primary MySQL node in the local Active cluster by default, if it is available, and to another available Active cluster if not. This is a powerful capability because it allows database clients to be serviced by database clusters in multiple locations. MSAA Connectors are limited to the local cluster by default, and cannot automatically route writes to a remote cluster even when manually configured to allow knowledge of a remote cluster.
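
The difference in write routing can be modeled with a small Python sketch. It only mirrors the behavior described in the paragraph above and is not the Connector's actual implementation: the `Cluster` class, the `pick_write_cluster` function and the rule of trying remote clusters in list order are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Cluster:
    name: str
    primary_available: bool  # True if this cluster's Primary can accept writes


def pick_write_cluster(topology: str, local: Cluster,
                       remotes: List[Cluster]) -> Optional[str]:
    """Return the name of the cluster that should receive a write, or None."""
    # Both topologies prefer the Primary in the local cluster.
    if local.primary_available:
        return local.name
    # Only CAA may fall back to another available Active cluster.
    if topology == "CAA":
        for remote in remotes:  # assumed order: as listed
            if remote.primary_available:
                return remote.name
    # MSAA, or CAA with no cluster available: nowhere to route the write.
    return None


nyc = Cluster("nyc", primary_available=False)
london = Cluster("london", primary_available=True)
print(pick_write_cluster("CAA", nyc, [london]))   # london
print(pick_write_cluster("MSAA", nyc, [london]))  # None
```

With the local nyc Primary unavailable, the CAA case falls back to london, while the MSAA case has no automatic fallback.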

MSAA can be preferable to CAA:

- if the cross-site latency is high

- if there are too many sites/regions, because the Manager bogs down as their number grows (the practical maximum varies with many factors)

- if there are too many Connectors, because the Manager likewise bogs down as their number grows

Wrap-Up

In this blog post we described the key differences between Multi-Site Active/Active (MSAA) and Composite Active/Active (CAA) topologies:

- MSAA Managers see only the local cluster while CAA Managers see all clusters.

- MSAA cross-cluster replication requires a separate installation of the Tungsten Replicator software package while CAA cross-cluster replication is managed by the cluster Managers.

- MSAA Connectors have access only to the local cluster, while CAA Connectors have full read and write access to all clusters.

For assistance with training or understanding your Tungsten Cluster, please get in touch via Continuent Support!
