Overview

This is an in-depth follow-up to this blog post about our product terminology changes by Chris Parker. For a more general description of Active/Active vs Active/Passive MySQL clustering, please refer to this blog.

In this blog post we explore and demystify the various Tungsten Cluster topologies by comparing the architecture and use-cases for each.

Core Concept: Cluster

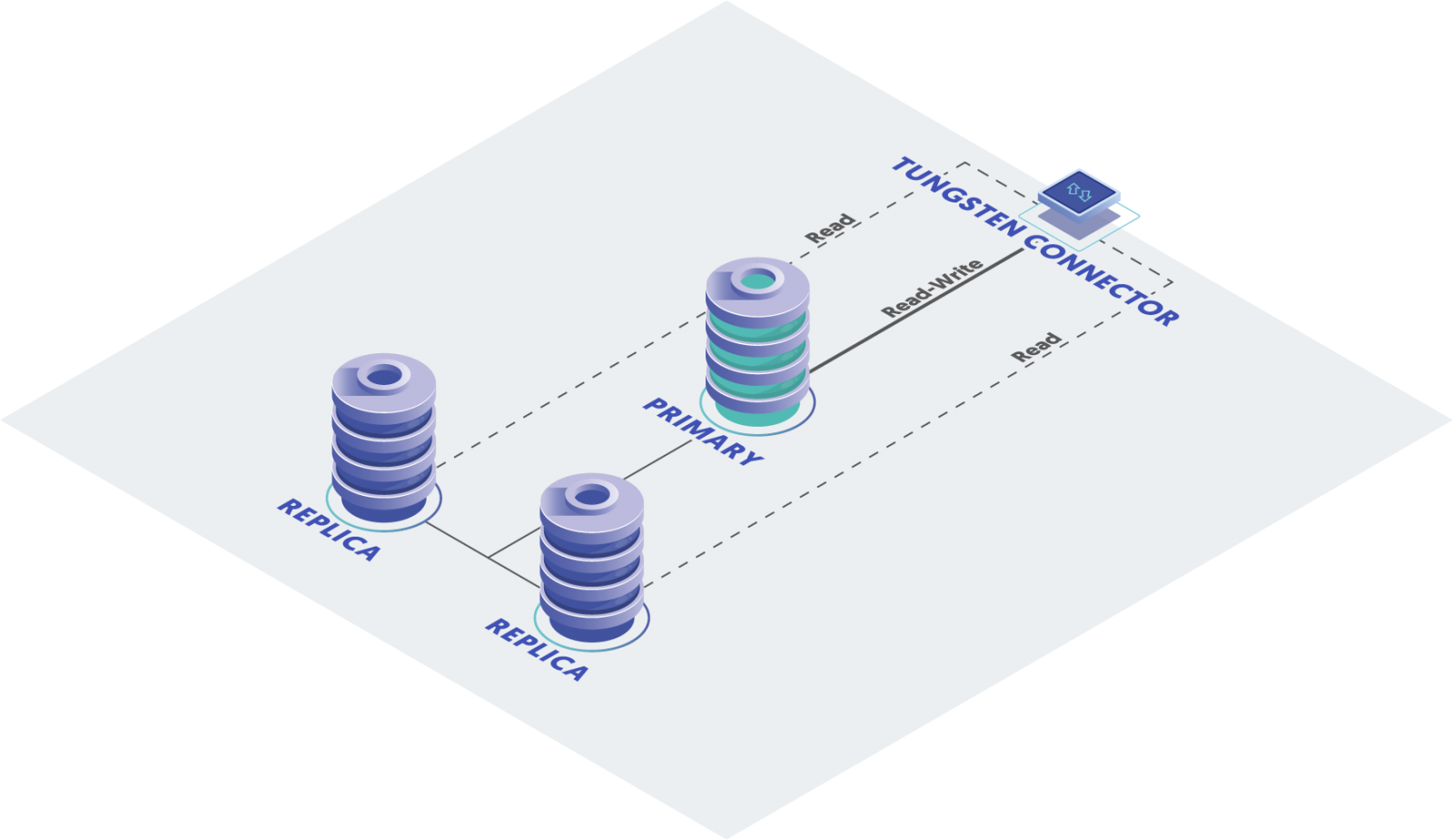

A cluster is a group of nodes running MySQL server managed by the Tungsten Clustering Suite of software. Each cluster contains one (and only one) Primary node and a minimum of two Replica nodes which contain an identical copy of the data from the Primary node.
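The composition rule above (exactly one Primary, at least two Replicas) can be expressed as a small check. This is only an illustrative sketch: the function name `is_valid_cluster` and the plain role strings are hypothetical and are not part of the Tungsten software.

```python
from collections import Counter


def is_valid_cluster(roles):
    """Check a list of node roles against the cluster definition above.

    `roles` is a hypothetical list of strings such as "primary" or "replica".
    """
    counts = Counter(roles)
    # One (and only one) Primary, and a minimum of two Replicas.
    return counts["primary"] == 1 and counts["replica"] >= 2
```

For example, `is_valid_cluster(["primary", "replica", "replica"])` passes, while a two-node list or a list with two primaries does not.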

In a cluster, replication is controlled by the Tungsten Manager, so we say that the "replication is managed" for that cluster.

When managed this way, we call the clusters “tightly-coupled”, because the Manager processes on each node share state with the other Managers and coordinate the configuration of the replication pipeline sources and targets as nodes switch roles. The Managers also communicate status with the Connectors to indicate which nodes have which roles (i.e. primary or replica). This status allows the Connectors to route reads and writes to the appropriate nodes.
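To make the role-based routing idea concrete, here is a toy model of a Connector that receives a role map and picks a node for each request. Everything in it is an assumption for illustration: the class `ConnectorSketch`, its methods, and the round-robin choice of Replica for reads are hypothetical, and the real Connector's read/write behavior depends on how it is configured.

```python
import itertools


class ConnectorSketch:
    """Toy stand-in for a Connector that routes by node role (hypothetical)."""

    def __init__(self, roles):
        self.update_roles(roles)

    def update_roles(self, roles):
        # `roles` maps node name -> "primary" or "replica", as a Manager
        # might report it after a switch or failover.
        primaries = [node for node, role in roles.items() if role == "primary"]
        if len(primaries) != 1:
            raise ValueError("expected exactly one primary")
        self.primary = primaries[0]
        self.replicas = sorted(n for n, r in roles.items() if r == "replica")
        self._replica_cycle = itertools.cycle(self.replicas)

    def route(self, is_write):
        # Writes always go to the Primary; reads rotate across Replicas
        # (an assumed policy) and fall back to the Primary if there are none.
        if is_write or not self.replicas:
            return self.primary
        return next(self._replica_cycle)
```

When roles change, calling `update_roles` with the new map is enough for subsequent writes to follow the new Primary, which mirrors the status updates described above.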

Core Concept: Composite Cluster

Next, a Composite cluster is a "cluster of clusters" or a "mesh", typically two or more clusters working together.

In a Composite cluster, all replication (both inside a single cluster and between clusters) is managed (tightly-coupled).

The key thing to remember is that Composite clusters have all replication pipelines managed.

The managed mesh may span geographic locations and Cloud providers (multi-cloud), and can even integrate on-premises hardware with Cloud-based instances (hybrid-cloud).

In any Composite cluster topology, each individual cluster may have either an Active role or a Passive role.

Active clusters contain a writable Primary node and some number of Replicas.

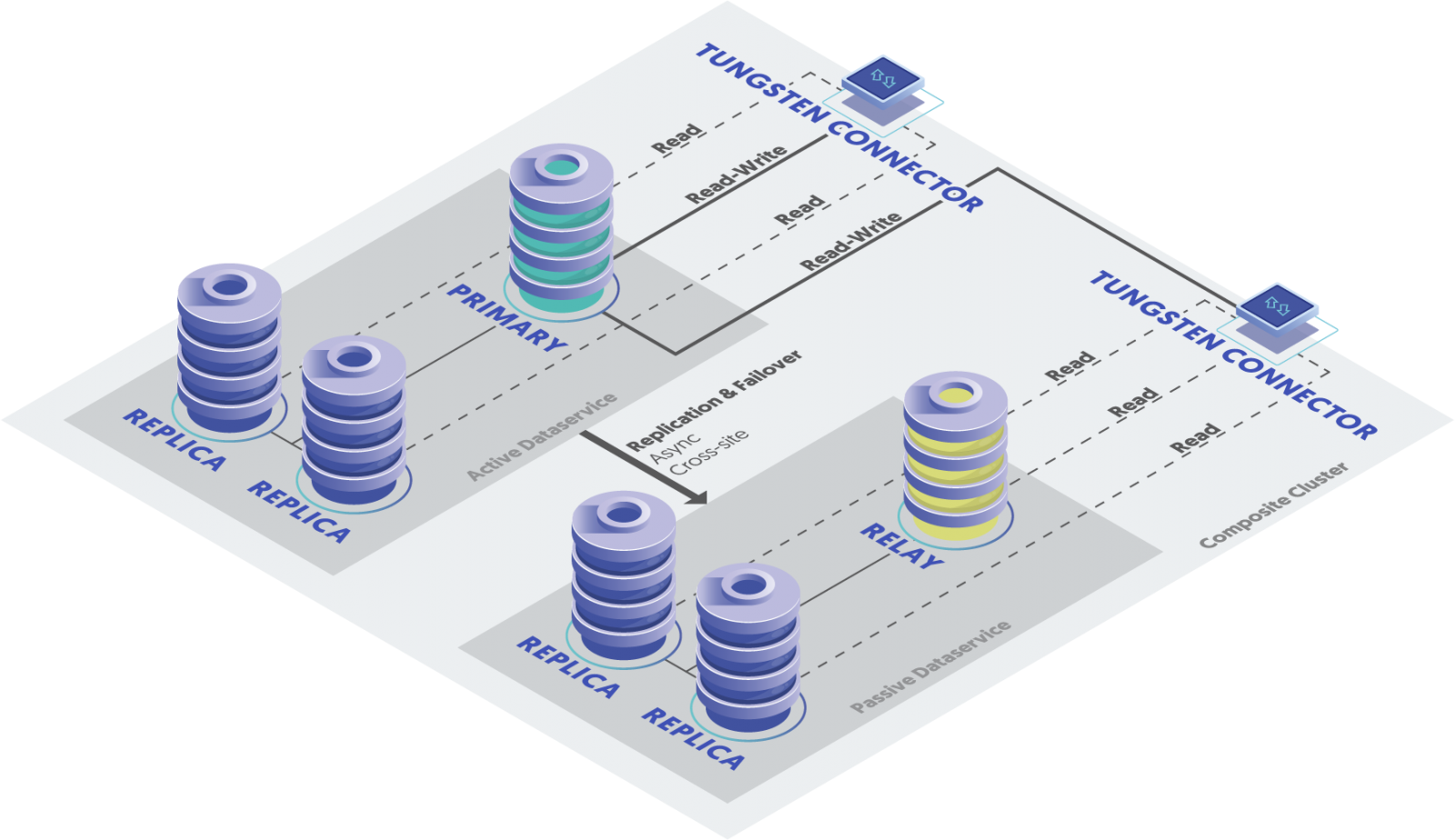

Passive clusters contain only Replica nodes and one special dual-purpose node called a Relay, which handles the pull of events from remote clusters in the mesh.

There are two types of Composite Clusters, Active/Active (CAA) and Active/Passive (CAP).

Tungsten Composite Active/Passive (CAP) Cluster Topology

SUMMARY: In a Composite Active/Passive topology, all writes are routed by the Connectors to the Primary node in the sole Active cluster.

In CAP, there is a single Active cluster containing the sole Primary writable MySQL host. There may be one or more Passive clusters.

The Connectors are multi-site aware and will automatically react to a site failover. Replication flows in one direction only, from the current Active cluster to the Passive cluster(s).

Since there is a single writable Primary database node across all clusters, all writes are directed to that Primary by the Connectors in all clusters.
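The CAP behavior described above can be sketched as a small function that, given a set of clusters, returns the single write target and the one-way replication flows. The function name `cap_plan` and the dictionary layout are hypothetical and chosen only for illustration.

```python
def cap_plan(clusters):
    """Return (write_target, replication_flows) for a CAP topology.

    `clusters` is a hypothetical dict: name -> {"role": "active" or
    "passive", "primary": host name or None}.
    """
    active = [name for name, c in clusters.items() if c["role"] == "active"]
    if len(active) != 1:
        raise ValueError("a CAP topology has exactly one Active cluster")
    source = active[0]
    # Every Connector, in every cluster, sends writes to this one Primary.
    write_target = clusters[source]["primary"]
    # Replication flows in one direction only: Active -> each Passive.
    flows = [(source, name) for name, c in clusters.items()
             if c["role"] == "passive"]
    return write_target, flows
```

With one Active cluster `east` and one Passive cluster `west`, the result is the Primary in `east` plus a single flow from `east` to `west`.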

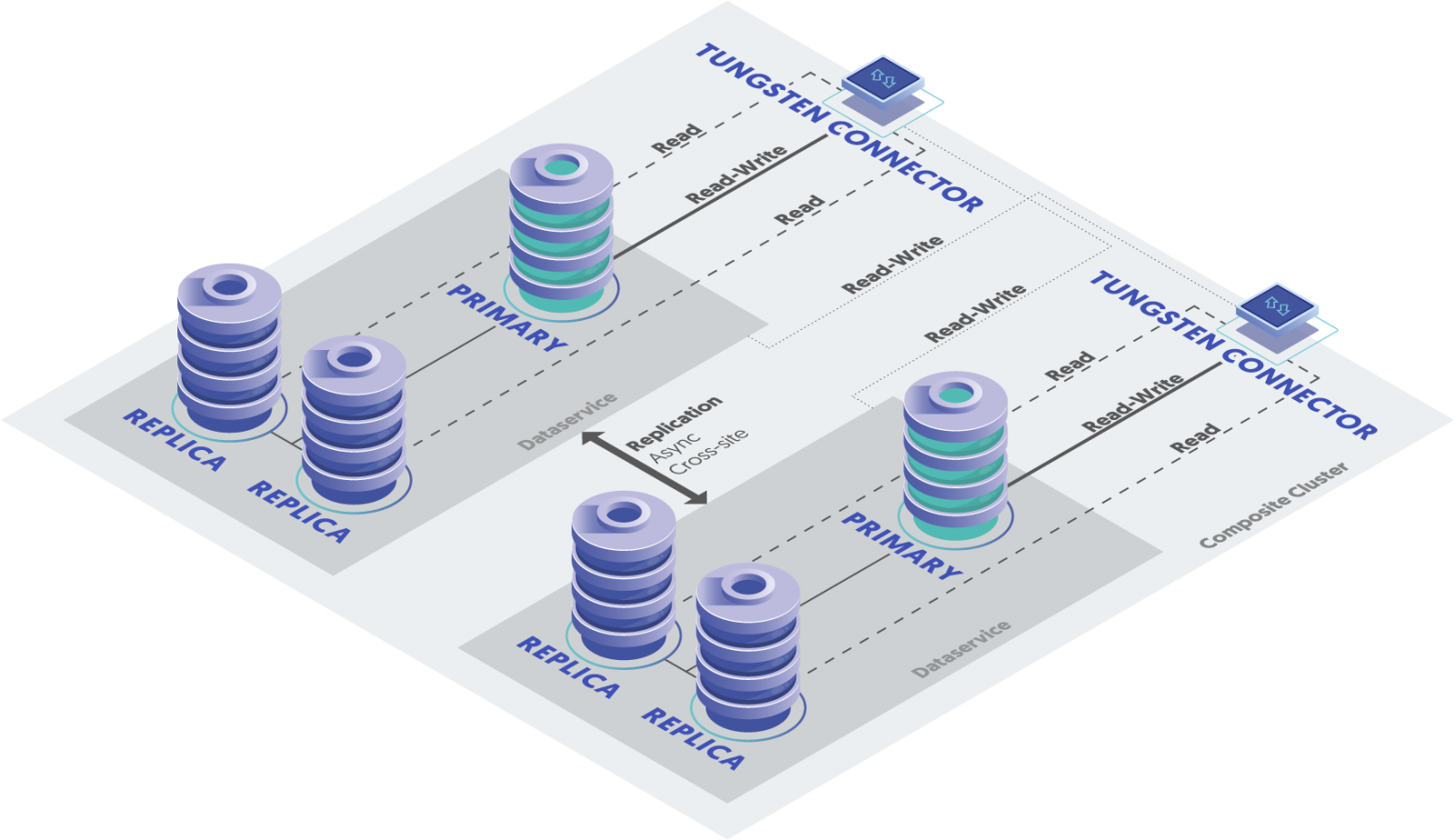

Tungsten Composite Active/Active (CAA) Cluster Topology

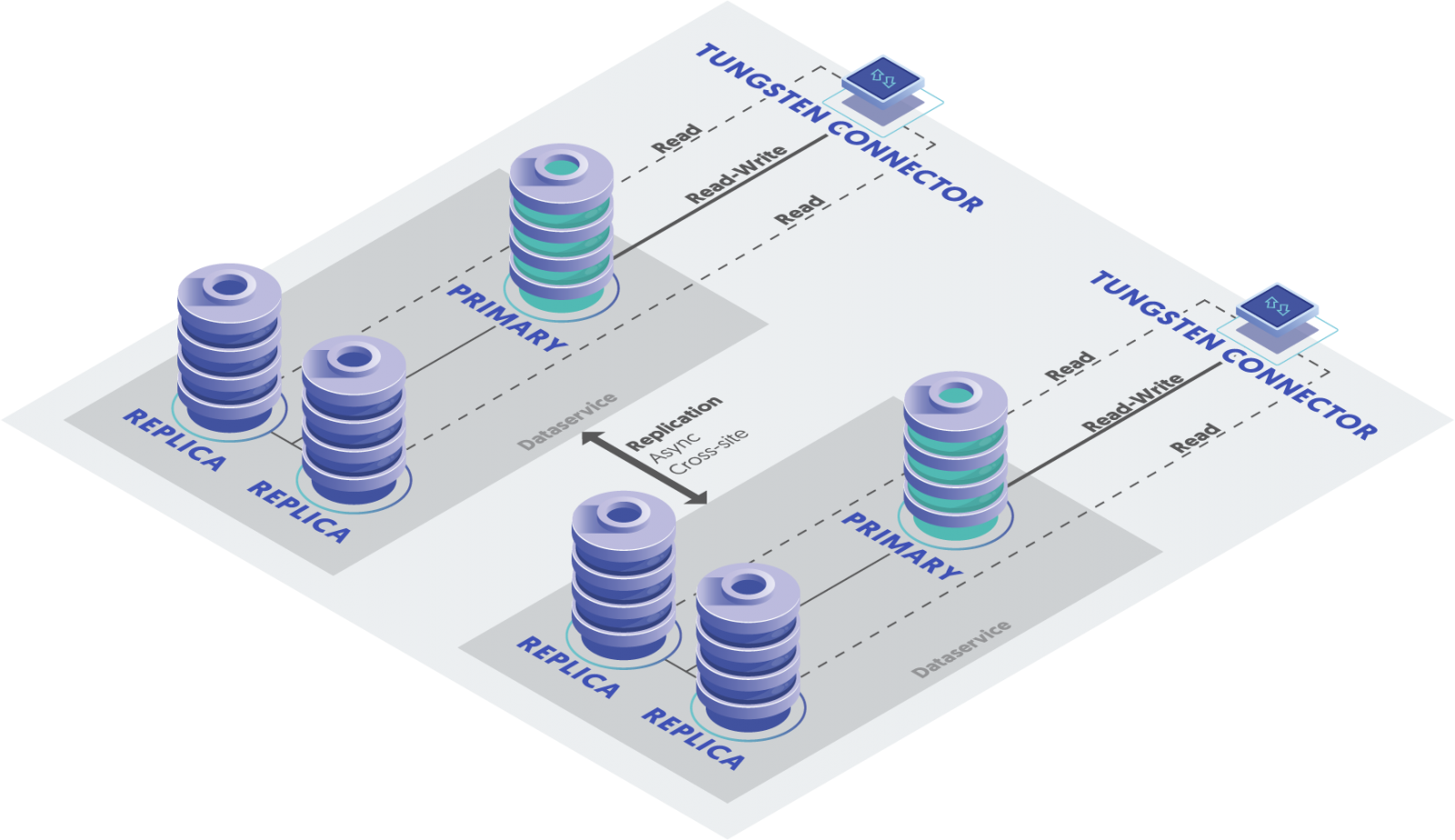

SUMMARY: In a Composite Active/Active topology, writes are routed to the Primary MySQL node in the local Active cluster, if available, and to another Active cluster if not.

In CAA, all managed clusters are Active, each containing a Primary writable MySQL host and some number of Replicas. There may be multiple Active clusters spanning physical locations and cloud providers.

The Connectors are multi-site aware and will automatically react to a site failover. Replication flows in ALL directions, so that every cluster pulls and applies THL from all other clusters in the mesh.
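Because every cluster pulls from every other cluster, the number of cross-cluster pipelines grows as n × (n − 1) for n clusters. The sketch below simply enumerates those (source, target) pairs; the function name `caa_replication_flows` and the cluster names are hypothetical.

```python
from itertools import permutations


def caa_replication_flows(cluster_names):
    """List every (source, target) cross-cluster pipeline in a full mesh."""
    # Ordered pairs: east -> west and west -> east are separate pipelines.
    return list(permutations(cluster_names, 2))
```

Two clusters yield two pipelines and three clusters yield six, which is worth keeping in mind when sizing a mesh.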

Since there are multiple writable Primary database nodes across all clusters, writes are directed to a Primary by the Connectors based on their configuration, typically to the local cluster's Primary first, and to another Active cluster’s Primary if the local one is unavailable.
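The local-first, remote-fallback write routing can be sketched as follows. This is a simplified model under stated assumptions: the function `caa_write_target`, the `available` flag, and the use of dictionary order as the fallback preference are all hypothetical, and real Connector behavior is driven by its configuration.

```python
def caa_write_target(local, clusters):
    """Pick the Primary that should receive a write in a CAA topology.

    `clusters` is a hypothetical dict: name -> {"primary": host name,
    "available": bool}. Its insertion order is used as the fallback order.
    """
    # Prefer the Primary in the Connector's own (local) cluster.
    if clusters[local]["available"]:
        return clusters[local]["primary"]
    # Otherwise fall back to the first other Active cluster that is up.
    for name, cluster in clusters.items():
        if name != local and cluster["available"]:
            return cluster["primary"]
    raise RuntimeError("no Active cluster is available for writes")
```

A Connector in `east` would write to the `east` Primary while it is up, and move to the `west` Primary only when `east` is marked unavailable.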

Core Concept: Multi-Site/Active-Active (MSAA)

SUMMARY: In a Multi-Site/Active-Active topology, writes are routed to the Primary MySQL node in the local Active cluster by the Connector. Replication between clusters is handled by a separate install of the Tungsten Replicator software acting on all nodes as a cluster-extractor.

Prior to Tungsten Clustering version 6, the only way to get bi-directional replication between multiple clusters was to install a separate, standalone copy of Tungsten Replicator in a different directory on every node and configure it as a cluster-extractor to pull and apply THL from all remote clusters.

The key thing to note here is that these cross-cluster replication pipelines are invisible to the Managers on each cluster, making them un-managed. That means that the cross-cluster replicators are configured and maintained separately from the cluster software. This also means that Connectors are unaware of remote clusters and will be unable to route traffic to a remote site in the event of a failure.

The fact that MSAA clusters are much more loosely-coupled has disadvantages and one distinct advantage.

Disadvantages

- Administrators must use two different sets of similar commands, which is confusing.

- The cross-cluster replicators can get out of sync with the cluster roles.

- Software upgrades must be managed separately.

- The replicators are not visible to the Tungsten Dashboard because they are unmanaged.

- Connectors are unaware of remote clusters and will be unable to route traffic to a remote site in the event of a failure.

Advantage

- Composite clusters require that the Managers on all nodes communicate with every other Manager. In clusters where there are very slow WAN links, the Managers do not perform as well. For clusters where sharing data is essential, but slow WAN links are creating an alerting nightmare, the more loosely-coupled MSAA may be exactly the correct answer!

Jargon and Nicknames

Internally, Continuent staff have often referred to the MSAA as “v5-style”, and the CAA as “v6-style”, because in version 5 and below, MSAA was the only way to achieve active/active replication between clusters.

As it turns out, the MSAA topology is just as viable in the version 6 software, so calling it “v5” is not correct.

If you catch us using that language, at least now you will understand why ;-}

The Concise View

Below is a table showing the list of topologies along with key information:

| Architecture (old name, if any) | Connector View by default | Description | Use-Case(s) |
|---|---|---|---|
| Standalone | Same cluster | A group of nodes running MySQL server managed by the Tungsten Cluster suite of software. Each cluster contains one (and only one) Primary and a minimum of two Replicas | Local high-availability and read-scaling in a single region or datacenter |
| Cluster-Extractor (formerly Cluster-slave) | None, no Connector | Cluster-aware replication OUT of one or more clusters to a single, standalone target | Move data inside a cluster to a non-clustered node for: |
| Multi-Site/Active-Active (MSAA), formerly Multi-Site/Master-Master (MSMM) | Same cluster only | MSAA loosely-couples two or more standalone clusters with a separate installation of a Tungsten Replicator Cluster-extractor on each node to handle pulling and applying data from other clusters. Clusters act independently, and have no awareness of each other, unlike a Composite cluster, where the Manager controls all replication pipelines. | Geo-distributed active/active environments with very high-latency WAN links. |
| Composite Active/Passive (CAP), formerly Composite Master-Slave (CMS) | All clusters | In a Composite Active/Passive topology, all writes are routed by the Connectors to the Primary node in the sole Active cluster. | |
| Composite Active/Active (CAA), formerly Composite Master-Master (CMM) | All clusters | In a Composite Active/Active topology, writes are routed to the Primary MySQL node in the local Active cluster, if available, and to another Active cluster if not. | |

Summary

We hope this blog post has made the Tungsten Cluster topologies easier to understand.

For more information, see this related blog post or contact us.
